Online DEMO: https://hanslindetorp.github.io/WebAudioXML/

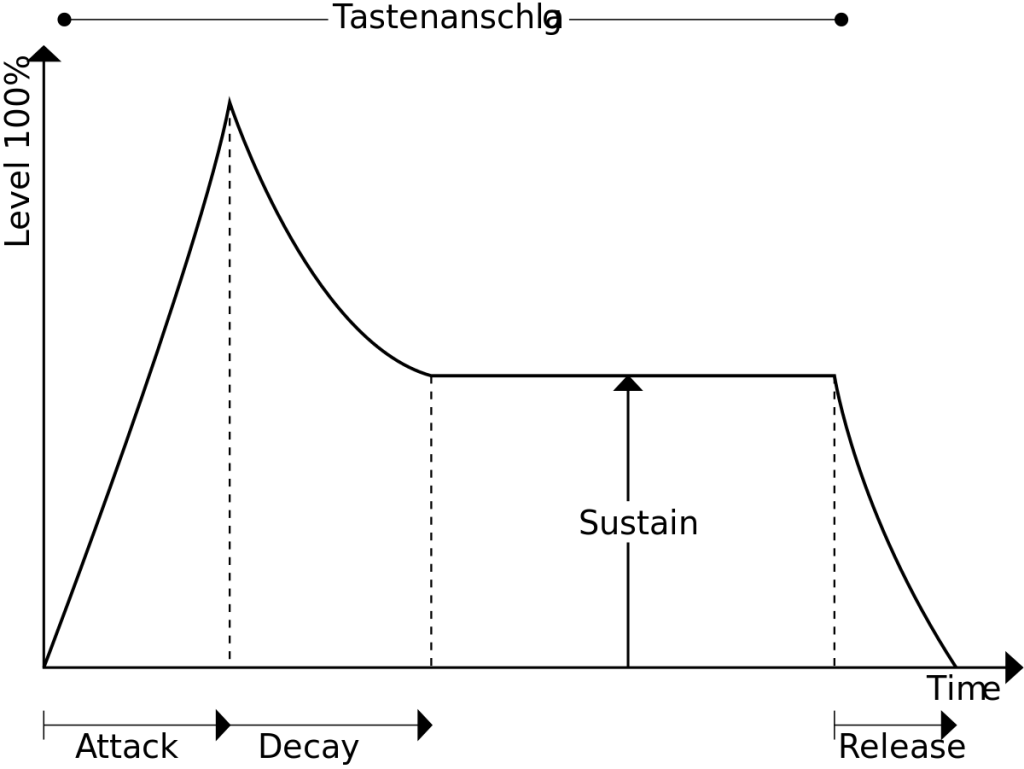

Since I first began formulating an XML language for audio configuration, which ended up as the WebAudioXML project, I have come across a lot of interesting perspectives on music, audio and programming. One of the most profound recent ones is about time and configuration, which I mentioned briefly in the post about envelopes and compositions. As WebAudioXML developed from a language for audio configuration, it became clear that as soon as you introduce an envelope object, or even a time-related property such as a frequency, you’re in the business of dealing with time.

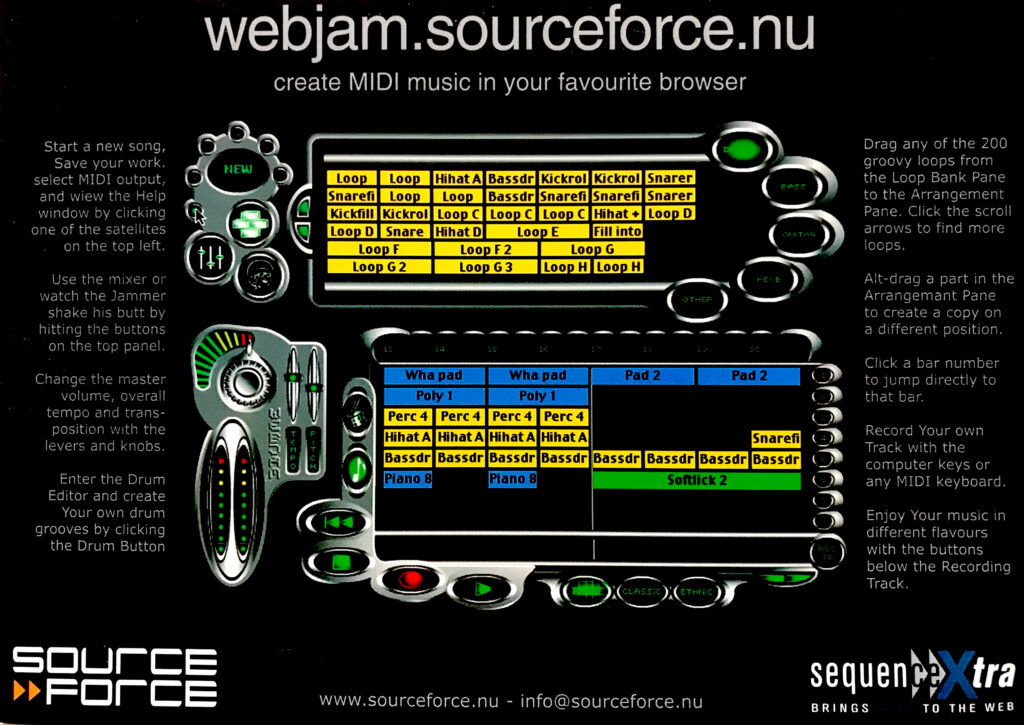

I have been treating my two projects, WebAudioXML and iMusicXML, as separate: iMusicXML deals with musical structures like arrangements, tracks, regions, loops, motifs, lead-ins etc., and WebAudioXML takes care of the configuration aspect of music production, like volumes, filters, reverbs etc. But it doesn’t work. I’ll have to merge them. There are no clear borders between them.

An illustration of this is the latest default variable I added to WebAudioXML: currentTime. It represents the time since the Web Audio API was initialized in the web page and is constantly updated. By mapping this variable to pitches and frequencies using the <var> element, we are suddenly making music using numbers in WebAudioXML. Oh no! (or: Hurray!) I found myself trapped in a Pure Data or Max/MSP approach to music…
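
To make the idea of "making music using numbers" concrete, here is a minimal TypeScript sketch of mapping a running clock to pitches. It is not how WebAudioXML implements the <var> element. The function names, the pentatonic scale, the half-second step and the base note are all assumptions made for illustration.

```typescript
// Hypothetical helpers for illustration only; not part of WebAudioXML.

// Semitone offsets of a major pentatonic scale (an arbitrary choice for this sketch).
const PENTATONIC: number[] = [0, 2, 4, 7, 9];

// Convert a MIDI note number to a frequency in Hz (A4 = MIDI note 69 = 440 Hz).
function midiToFrequency(midiNote: number): number {
  return 440 * Math.pow(2, (midiNote - 69) / 12);
}

// Map a clock value in seconds to a note in the scale:
// advance one scale step every `stepSeconds`, wrapping around at the end.
function timeToMidiNote(
  currentTime: number,
  stepSeconds: number = 0.5,
  baseNote: number = 60
): number {
  const step = Math.floor(currentTime / stepSeconds) % PENTATONIC.length;
  return baseNote + PENTATONIC[step];
}

// Combine the two mappings: time in seconds -> frequency in Hz.
function timeToFrequency(currentTime: number): number {
  return midiToFrequency(timeToMidiNote(currentTime));
}
```

In a browser, something similar could be driven by reading `currentTime` from an `AudioContext` at regular intervals and assigning the result to an oscillator's `frequency.value`. The point is only that once a clock value is available as a variable, a plain numeric mapping is enough to turn configuration into a melody.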