I have been working on two different XML languages for some time, WebAudioXML and iMusicXML, with two clearly distinct targets. Or so I thought, until now. So my current question is: is there really any good reason for treating a composition as a different type of object than an envelope? Or even a phase of a waveform? They are all carriers of information in the sound domain, even if they span quite different ranges of time. A phase of a waveform might be as short as 1 ms, an envelope around one second, and a composition closer to one minute. But can anyone draw a distinct line between them? And what do we gain if we manage to?

This relates to what music actually is, and I know people have been thinking about that for a long time, including John Cage with his famous composition of 4 minutes and 33 seconds of silence. So what’s my point in coming back to the topic?

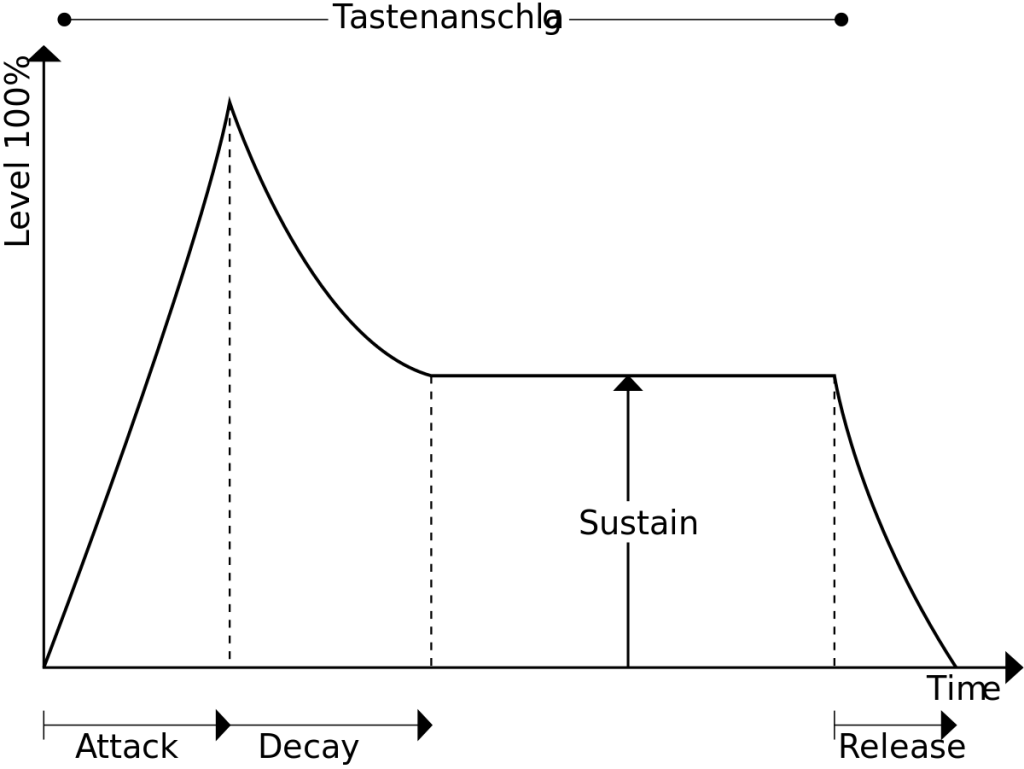

Well, it actually makes a difference when you are designing a language, as I am. Is it one language or two? Should “arrangement”, “envelope” and “oscillator” be terms of the same language or of different ones? I’m currently leaning towards the former, and I think there is great potential in doing so. If so, I’m arguing that there is no real difference between an automation on a track in a DAW and an ADSR envelope in a synthesizer. A melody and a vibrato are variations of the same phenomenon rather than something vastly different.
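To make the idea a bit more concrete, here is a minimal sketch in Python. It assumes a hypothetical `Curve` type (not part of WebAudioXML or iMusicXML) that stores nothing but breakpoints of time and value. The same type then describes one cycle of a waveform, an ADSR-like envelope and a track automation; only the time scale differs. All names and numbers are made up for illustration.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass(frozen=True)
class Curve:
    """Hypothetical time/value curve: (time_in_seconds, value) pairs, sorted by time."""
    points: tuple

    @property
    def duration(self) -> float:
        # Time span from the first to the last breakpoint.
        return self.points[-1][0] - self.points[0][0]

    def value_at(self, t: float) -> float:
        # Linear interpolation between neighbouring breakpoints,
        # holding the first/last value outside the curve's range.
        times = [p[0] for p in self.points]
        if t <= times[0]:
            return self.points[0][1]
        if t >= times[-1]:
            return self.points[-1][1]
        i = bisect_right(times, t)
        (t0, v0), (t1, v1) = self.points[i - 1], self.points[i]
        return v0 + (v1 - v0) * (t - t0) / (t1 - t0)

    def stretched(self, factor: float) -> "Curve":
        # Same shape on a different time scale.
        return Curve(tuple((t * factor, v) for t, v in self.points))


# One cycle of a triangle wave, about 1 ms long.
cycle = Curve(((0.0, 0.0), (0.00025, 1.0), (0.00075, -1.0), (0.001, 0.0)))

# An ADSR-like envelope, about one second long.
envelope = Curve(((0.0, 0.0), (0.05, 1.0), (0.2, 0.7), (0.8, 0.7), (1.0, 0.0)))

# The very same shape used as a one-minute track automation.
automation = envelope.stretched(60)

for name, curve in (("cycle", cycle), ("envelope", envelope), ("automation", automation)):
    print(name, curve.duration)
```

Nothing in the type itself knows whether it is a "waveform", an "envelope" or an "arrangement". That label only comes from how long the curve is and what it is connected to.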

It’s all a composition. Simple or complex. Long or short.
